Quantum Computing (is our current encryption safe?)

I’ve recently done a deep dive into quantum computing after reading an article reporting that researchers predict quantum computers could break the RSA encryption algorithm (one of the main encryption methods in the digital landscape) within the next 5-15 years.

I’ve learned a lot through my research and found that there’s a lot of confusion, and the data is scattered everywhere. Finding all the information needed to make an educated evaluation is laborious, and what is available is still based primarily on theories and proofs of concept.

The model I’ve found most plausible is a figure of 4,099 logical qubits, which comes from a serious 2003 analysis by computer scientist Stéphane Beauregard in his paper “Circuit for Shor’s algorithm using 2n+3 qubits.” For a 2,048-bit key, n = 2,048, so 2n+3 = 4,099.
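The 4,099 figure follows directly from the 2n+3 formula in the paper's title. Here is a minimal sketch of that arithmetic; the function name is my own, and it only counts logical qubits, saying nothing about run time or error correction.

```python
def beauregard_logical_qubits(n_bits: int) -> int:
    """Logical qubits in the 2n+3 circuit for an n-bit number to factor."""
    return 2 * n_bits + 3


# Common RSA key sizes (in bits) and the logical qubits the formula gives.
for n_bits in (1024, 2048, 4096):
    print(f"RSA-{n_bits}: {beauregard_logical_qubits(n_bits):,} logical qubits")
```

For RSA-2048 this prints 4,099, matching the figure quoted above.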

In an article, quantum analyst Marin Ivezic writes, “Theoretically, just a few thousand perfect qubits could crack RSA-2048 overnight – practically, achieving those “perfect qubits” is a monumental task that no one has completed yet.”1

First, let’s talk about what a qubit is.

All computations involve inputting data and then getting a final answer. In classical computation we use a bit to represent data; in quantum computation we use a quantum bit, or qubit. A classical bit is always one of two alternatives, a binary 0 or 1, while a qubit can exist in a superposition of both the 0 and 1 states simultaneously, which makes it more powerful for certain complex calculations. Classical bits are deterministic and stable, while qubits are probabilistic until measured and are sensitive to environmental disturbance, which causes decoherence and knocks them out of superposition.
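To make "probabilistic until measured" a little more concrete, here is a rough sketch that treats a single qubit as two amplitudes and applies the standard rule that the chance of reading 0 or 1 is the squared magnitude of each amplitude. The function names are my own, and the measurement is only imitated with Python's ordinary pseudo-random generator, so this is an illustration of the bookkeeping and not real quantum behavior.

```python
import math
import random


def measurement_probabilities(alpha: complex, beta: complex) -> tuple[float, float]:
    """Return (P(0), P(1)) for the state alpha|0> + beta|1>, normalized."""
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    return abs(alpha) ** 2 / norm, abs(beta) ** 2 / norm


def measure(alpha: complex, beta: complex, rng: random.Random) -> int:
    """Imitate one measurement: the result is a plain 0 or 1."""
    p0, _ = measurement_probabilities(alpha, beta)
    return 0 if rng.random() < p0 else 1


# An equal superposition of 0 and 1.
alpha = beta = 1 / math.sqrt(2)
p0, p1 = measurement_probabilities(alpha, beta)

# Stand-in randomness (a seeded PRNG), used only to tally simulated outcomes.
rng = random.Random(0)
counts = [0, 0]
for _ in range(1000):
    counts[measure(alpha, beta, rng)] += 1

print(f"P(0)={p0:.2f}, P(1)={p1:.2f}, simulated counts={counts}")
```

Each individual measurement gives a definite 0 or 1; only the tally over many runs reflects the 50/50 split.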

Our classical view of computation has us accustomed to bits with a deterministic value of either 0 or 1, while qubits exist in a probabilistic superposition of both 0 and 1 until measured. This difference carries over to randomness. Classical randomness usually comes from pseudo-random number generators (PRNGs), which produce sequences that appear random but are based on a mathematical formula and can be reproduced by anyone who knows the algorithm and the seed. An example you may be more familiar with is the toss of a fair coin.

In his book Quantum Computing For Everyone, Chris Bernhardt explains why classical randomness is not truly random:

“Tossing a coin is something that is described by classical mechanics. It can be modeled using calculus. To compute whether the coin lands heads or tails up, you need first to carefully measure the initial conditions: the weight of the coin, the height above the ground, the force of the impact of the thumb on the coin, the exact location on the coin where the thumb hits, the position of the coin, and so forth and so on. Given all of these values exactly, the theory will tell us which way up the coin lands. There is no actual randomness involved. Tossing a coin seems random because each time we do it the initial conditions vary slightly. These slight variations can change the outcome from heads to tails and vice versa. There is no real randomness in classical mechanics, just what is often called sensitive dependence (italics added) to initial conditions – a small change in the input can get amplified and produce an entirely different outcome. The underlying idea concerning randomness in quantum mechanics is different. The randomness is true randomness.”2

Quantum randomness, by contrast, is an inherent property of the system itself, especially when dealing with entanglement, where one atom’s energy state can become dependent on another’s. Quantum randomness is often considered “truly random” because its outcomes are governed by quantum mechanics, making them fundamentally unpredictable and more secure than classically generated “random” numbers, which can be predicted if the algorithm and seed are known. It’s important to note that although a fair coin toss appears random, the laws of physics in classical mechanics are deterministic: if we could measure the initial conditions with perfect accuracy, the randomness would disappear.
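The point about seeds is easy to see in practice. This small sketch uses Python's built-in `random` module (a PRNG) to "toss coins"; the helper name `coin_tosses` is just for illustration. Anyone who knows the algorithm and the seed can replay the exact same sequence.

```python
import random


def coin_tosses(seed: int, n: int = 10) -> list[str]:
    """Generate n pseudo-random coin tosses from a given seed."""
    rng = random.Random(seed)  # independent generator with a known seed
    return [rng.choice("HT") for _ in range(n)]


first = coin_tosses(seed=42)
second = coin_tosses(seed=42)

print(first)
print(second)
print("Identical sequences:", first == second)  # True: same seed, same tosses
```

This reproducibility is useful for testing, but it is exactly why a plain PRNG with a guessable seed should not be used to generate cryptographic keys.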

Below is some relevant terminology, along with the encryption methods used under the hood by services we use daily, like web browsers, email services, and VPNs. These services use encryption to establish secure connections and to support digital signatures, online banking, and identity solutions. The distinction between logical and physical qubits is crucial for understanding how far away we are from actually factoring an RSA key.

Physical qubit

- The actual hardware: the real-world quantum system that can be prepared to encode information.

Logical qubit

- An error-corrected qubit. Physical qubits are susceptible to noise and errors from the environment, which can corrupt the quantum information, so it takes multiple physical qubits to create one logical qubit.

Marin Ivezic tells us,

“a single logical qubit might require on the order of 1,000 or more physical qubits in a surface code array. Gidney & Ekerå, for instance, modeled their 8-hour RSA-breaker with a surface-code architecture and found it would need on the order of 20 million physical qubits in total – about 20,000 logical qubits times roughly 1,000 physical qubits per logical. Similarly, Beauregard’s notional 4,099 logical qubits would translate to several million physical qubits if you actually built the error-correcting fabric for them.”1
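The arithmetic in that quote can be sketched in a few lines. The flat ratio of 1,000 physical qubits per logical qubit is taken from the quote as a rough order of magnitude, not a measured value, and the names below are my own.

```python
# Rough overhead from the quote: ~1,000 physical qubits per logical qubit.
# This is an order-of-magnitude assumption, not a hardware specification.
PHYSICAL_PER_LOGICAL = 1_000


def physical_qubits(logical: int, overhead: int = PHYSICAL_PER_LOGICAL) -> int:
    """Estimate the physical qubits needed for a given logical qubit count."""
    return logical * overhead


estimates = {
    "Beauregard (4,099 logical)": physical_qubits(4_099),
    "Gidney & Ekerå (~20,000 logical)": physical_qubits(20_000),
}

for label, total in estimates.items():
    print(f"{label}: about {total:,} physical qubits")
```

With this assumption, 4,099 logical qubits come out at roughly 4.1 million physical qubits and 20,000 logical qubits at 20 million, in line with the quote.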

Encryption key sizes and their decimal lengths:

RSA key: built on a number that is the product of two large primes.

- Common RSA Key:

- 2048-bit is a 617-digit decimal number: considered a minimum for most applications today.

- 3072-bit or 4096-bit: recommended for high-security applications. A 4096-bit key is a decimal number of up to 1,234 digits.
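The digit counts for these key sizes can be checked directly. This is a small sketch (the function name is my own) that counts the decimal digits of the largest number that fits in a given number of bits.

```python
def decimal_digits(bits: int) -> int:
    """Decimal digits in the largest integer representable in `bits` bits."""
    largest = (1 << bits) - 1  # 2**bits - 1, i.e. all bits set to 1
    return len(str(largest))


for bits in (2048, 3072, 4096):
    print(f"{bits}-bit: up to {decimal_digits(bits):,} decimal digits")
```

A 2048-bit number has up to 617 decimal digits, a 3072-bit number up to 925, and a 4096-bit number up to 1,234.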

ECC key: a 256-bit ECC key is considered roughly as secure as a 3072-bit RSA key against classical attacks.

- Common ECC Key:

- 256-bit. For comparison, a SHA-256 hash (used in the Bitcoin network) is also a 256-bit number, although it is the output of a hash function and not an ECC key. That output is equivalent to 32 bytes or 64 hexadecimal characters, and there are 2^256 possible hash values.
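The SHA-256 sizes above are easy to confirm with Python's standard `hashlib` module. The input message here is arbitrary and only for illustration.

```python
import hashlib

# Any input, of any length, produces a digest of the same fixed size.
digest = hashlib.sha256(b"hello quantum world").digest()
hex_digest = digest.hex()

print("bits:", len(digest) * 8)        # 256
print("bytes:", len(digest))           # 32
print("hex chars:", len(hex_digest))   # 64
print("possible values: 2**256 =", 2 ** 256)
```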

Today’s largest quantum computer by reported qubit count is Atom Computing’s, at 1,180 qubits. Next is IBM’s Condor at 1,121, then Google, whose focus is on error correction with chips like “Willow” at 105 qubits. Microsoft is developing a new kind of processor using topological qubits; its “Majorana 1” chip has 8 qubits but is designed to scale to 1 million.

The question arises: are these companies reporting physical or logical qubits?

I believe these numbers refer to physical qubits.

As stated in an article by The Quantum Insider, “However, quantum computing faces a major challenge: qubits are highly sensitive to environmental noise. Small disturbances — such as electromagnetic waves, temperature changes, or even cosmic radiation — can cause errors in calculations. This fragility has made it difficult to scale quantum systems beyond a few hundred qubits while maintaining computational accuracy.”3

This supports the notion that we need many physical qubits to obtain a single error-corrected logical qubit.

Knowing this, I lean toward the view that these companies report their physical qubit counts for better optics with the public. We must also realize that all of these companies are looking to raise investment from the market, so a bigger number plays better in a headline-driven society.

Some current figures on five of the large players:

- Atom Computing: 1,180 qubits

- IBM’s Condor: 1,121 qubits

- Google’s Willow: 105 qubits

- Amazon Web Services’ Ocelot: 14 qubits

- Microsoft’s Majorana 1: 8 qubits

Google and IBM use superconducting circuits, which operate at extremely low temperatures and are highly sensitive to environmental noise.

Atom Computing uses neutral-atom qubits, which are built from individual neutral atoms instead of charged ions or other systems. These qubits are controlled with lasers.

Microsoft’s Majorana 1 processor uses a new type of qubit called a topological qubit. This type of qubit is still largely theoretical. “Imagine a topological qubit as a knot in a rubber band. You can stretch or twist the rubber band, but the knot stays intact.”4 This stability is crucial because quantum computers are very sensitive to their environment. It’s important to note that this is only a claim, and there is not yet enough proof for experts to agree. Microsoft claims it can scale to more than 1 million qubits by 2030.

Amazon Web Services (AWS) introduced Ocelot: “AWS claims that Ocelot can reduce the cost of implementing error correction by up to 90%, a key metric for making quantum computers more scalable and useful for real-world applications.”3 Ocelot was released with an architecture of 14 physical qubits, including buffers and detection circuits. Specifically, their article published in Nature states that 5 of these are cat qubits.5

With these figures, in my opinion they’re describing physical qubits. As we see with IBM’s Condor at 1,121, they likely need many more physical qubits to get enough logical qubits for useful computation. AWS’s Ocelot uses 14 qubits, which they say are physical, but it relies on “cat qubits,” a type of qubit that intrinsically suppresses certain errors, essentially doing more with less.

Conclusion:

The quantum race is underway, and there’s a lot of noise in this industry about the short- and long-term implications. A first-principles approach tells me that a lot of the individual companies’ data may be marketing hype to secure funding. This is just my view; take it for what it’s worth. Some analyses claim that these computers, if brought to the commercial market, could break current encryption methods by 2030. There is a non-zero chance this occurs, and many companies are already positioning themselves with quantum-resistant methods. Only time will show us what really happens. With the current AI revolution underway, the innovation timeline could get even faster, and with real-world potential in drug research, financial modeling, aerospace, and cybersecurity, the financial incentives are there. We should be prepared for unknown breakthroughs, better error-correction methods, or even a different quantum factoring algorithm that could change the timeline.

Keep the faith,

Resources:

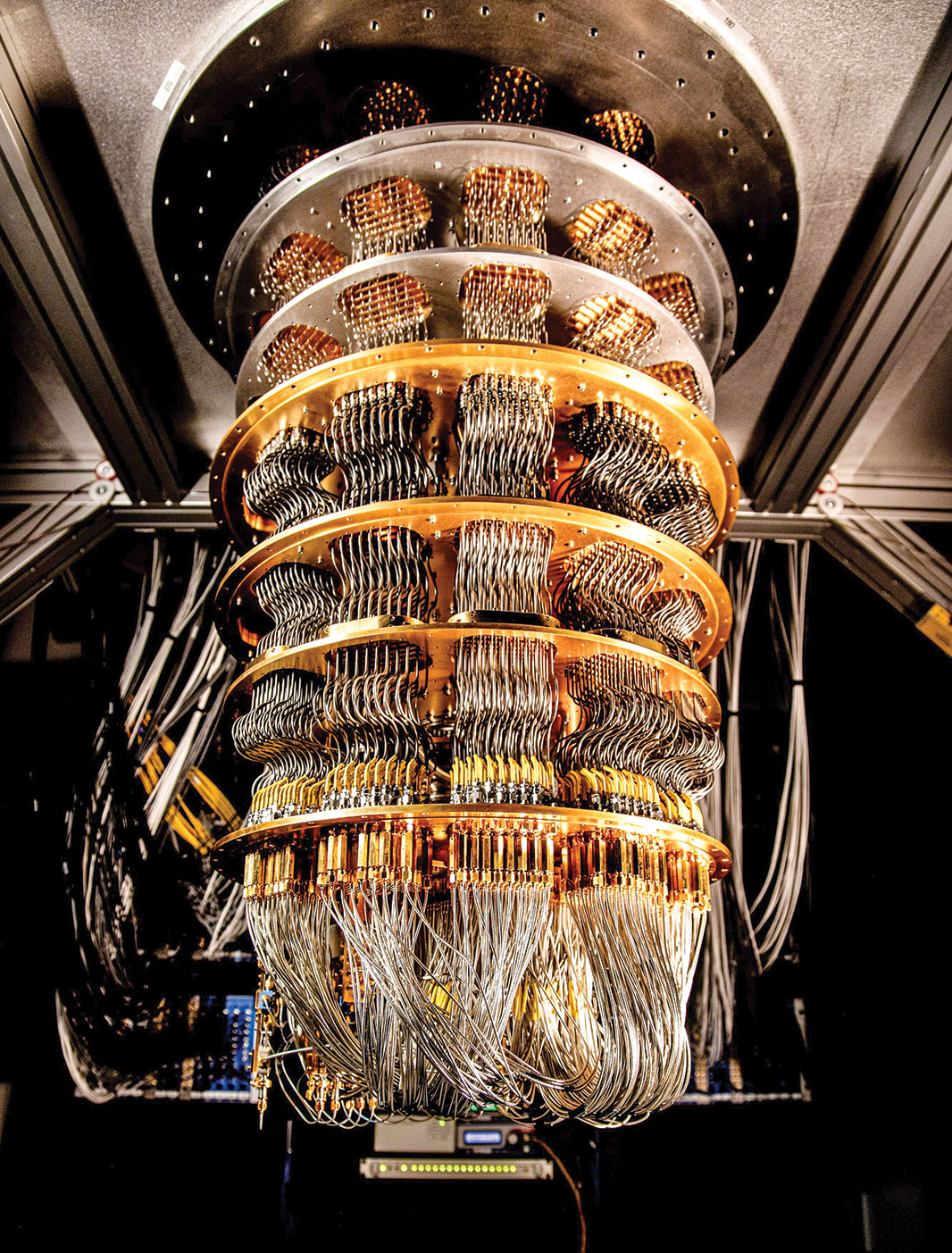

Featured Image: Google’s Quantum Chip. Image Source: Retrieved November 19, 2025, from https://www.science.org/content/article/quantum-computers-take-key-step-toward-curbing-errors.

1) Marin Ivezic. “4,099 Qubits: The Myth and Reality of Breaking RSA-2048 with Quantum Computers.” POSTQUANTUM, 5 Mar. 2024, https://postquantum.com/post-quantum/4099-qubits-rsa/.

2) Chris Bernhardt, Quantum Computing For Everyone (Massachusetts: MIT Press, 2020) pp. 10-11

3) Matt Swayne. “AWS Unveils Ocelot, Its First Quantum Computing Chip.” The Quantum Insider, 27 Feb. 2025, https://thequantuminsider.com/2025/02/27/aws-unveils-ocelot-its-first-quantum-computing-chip/.

4) Editorial Desk. “Microsoft’s Majorana 1 Chip – All you need to know guide.” United States Artificial Intelligence Institute, 25 Feb. 2025, https://www.usaii.org/ai-insights/microsoft-majorana-1-chip-all-you-need-to-know-guide.

5) Putterman, H., Noh, K., Hann, C.T. et al. “Hardware-efficient quantum error correction via concatenated bosonic qubits.” Nature, Vol. 638, Feb. 2025, pp. 927–934. https://doi.org/10.1038/s41586-025-08642-7.

Further Study Resources:

Reading:

Stéphane Beauregard. “Circuit for Shor’s algorithm using 2n+3 qubits.” Quantum Information and Computation, Vol. 3, No. 2, Jan. 2003, pp. 175-185. arXiv:quant-ph/0205095.

Craig Gidney and Martin Ekerå. “How to factor 2048 bit RSA integers in 8 hours using 20 million noisy qubits.” Quantum, Vol. 5, Apr. 2021, p. 433. https://doi.org/10.22331/q-2021-04-15-433.

Ian Khan. “Quantum Supremacy to Quantum Utility: How Error-Corrected Quantum Computers Will Transform Industries by 2030.” Ian Khan Blog, 13 Nov. 2025, https://www.iankhan.com/quantum-supremacy-to-quantum-utility-how-error-corrected-quantum-computers-will-transform-industries-by-2030/.

Podcasts:

Preston Pysh. “BTC253: Quantum Computing and Bitcoin w/ Charles Edwards.” Bitcoin Fundamentals, The Investor’s Podcast, 11 Nov. 2025, https://www.theinvestorspodcast.com/bitcoin-fundamentals/quantum-computing-and-bitcoin-w-charles-edwards/.
